January 09, 2023

EMM - creating a coherent, updated map.

The Environment Mapping Module (EMM) creates georeferenced, aligned, up-to-date 3D maps of outdoor and indoor environments using sensors on board unmanned vehicles (UxVs).

What is EMM?

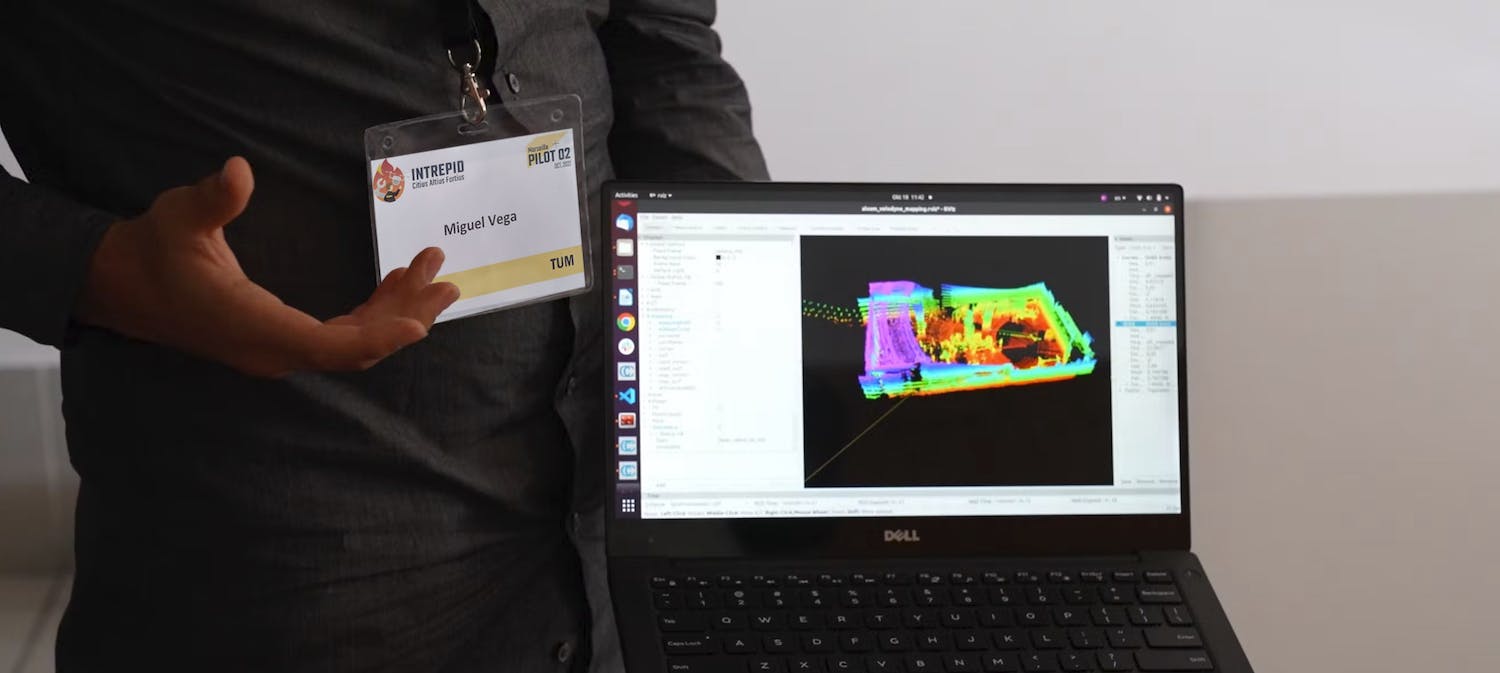

In a few words, EMM, or Environment Mapping Module, creates 3D maps of outdoor and indoor environments using sensors mounted on UxVs. Miguel A. Vega Torres from the Chair of Computational Modeling and Simulation at the Technical University of Munich (TUM) is in charge of this module in INTREPID.

Because global positioning systems (GPS) are usually unreliable indoors, a robot typically has to rely on its other onboard sensors to determine its position.

The EMM leverages prior information, such as 3D building information models (BIM models) or 2D floor plans, to localize the robot accurately in indoor and outdoor environments.

With accurate position information, the EMM can then create an updated map of the environment, which is also aligned with the prior information.

The EMM is a back-end module, which means that it is something that the users don't see but that works behind the scenes and provides content that end users will eventually see on the interface.

How it works

EMM receives information from the sensors on the robots. For example, the LiDAR sensor on an unmanned ground vehicle (UGV) delivers a point cloud: the set of points captured by one sensor scan from a single position.

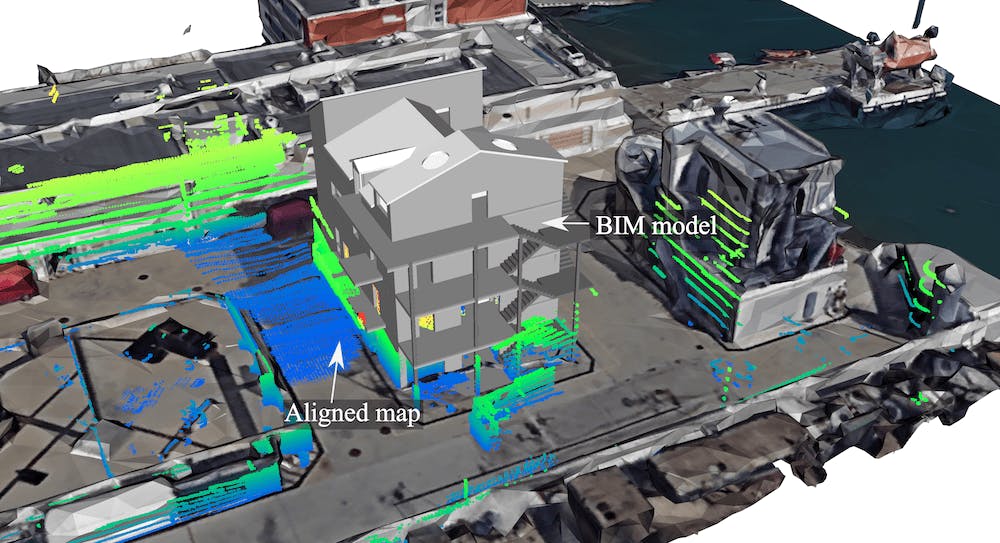

EMM collects this information and tries to match it with the prior map, for instance, with the BIM model. In this way, EMM can track the robot's position and orientation while it moves through the environment.
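The matching step can be illustrated with a small sketch. The source does not say which registration algorithm the EMM uses, so the example below swaps in a plain 2D iterative closest point (ICP) loop as a stand-in: each scan point is paired with its nearest map point, and the rotation and translation that best align the pairs are then computed in closed form. The function names, the corner-shaped map, and the simulated pose are all hypothetical.

```python
import math

def apply_pose(points, theta, tx, ty):
    """Rotate 2D points by theta (radians), then shift them by (tx, ty)."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in points]

def best_rigid_2d(src, dst):
    """Least-squares rotation and translation mapping paired src points onto dst."""
    n = len(src)
    sx = sum(p[0] for p in src) / n
    sy = sum(p[1] for p in src) / n
    dx = sum(p[0] for p in dst) / n
    dy = sum(p[1] for p in dst) / n
    dot = cross = 0.0
    for (ax, ay), (bx, by) in zip(src, dst):
        ax, ay, bx, by = ax - sx, ay - sy, bx - dx, by - dy
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    # The translation moves the rotated source centroid onto the target centroid.
    return theta, dx - (c * sx - s * sy), dy - (s * sx + c * sy)

def nearest(point, candidates):
    """Brute-force nearest neighbour; real systems would use a spatial index."""
    return min(candidates,
               key=lambda q: (q[0] - point[0]) ** 2 + (q[1] - point[1]) ** 2)

def icp_2d(scan, prior_map, iterations=10):
    """Estimate the pose (theta, tx, ty) that maps the scan onto the prior map."""
    theta, tx, ty = 0.0, 0.0, 0.0
    for _ in range(iterations):
        moved = apply_pose(scan, theta, tx, ty)
        matches = [nearest(p, prior_map) for p in moved]
        theta, tx, ty = best_rigid_2d(scan, matches)
    return theta, tx, ty

# Hypothetical prior map: two walls meeting in a corner, sampled every 0.5 m.
prior_map = [(0.5 * i, 0.0) for i in range(11)] + \
            [(0.0, 0.5 * j) for j in range(1, 11)]

# Simulated scan: the same walls expressed in the frame of a robot whose
# true pose (assumed for this example) is slightly rotated and shifted.
true_pose = (0.02, 0.05, -0.04)
_c, _s = math.cos(true_pose[0]), math.sin(true_pose[0])
scan = [(_c * (x - true_pose[1]) + _s * (y - true_pose[2]),
         -_s * (x - true_pose[1]) + _c * (y - true_pose[2]))
        for x, y in prior_map]

estimated_pose = icp_2d(scan, prior_map)
```

In this idealized setup every scan point has a true counterpart in the map. Furniture, people, and other elements missing from the prior map would produce scan points with no valid partner, which is why such discrepancies make the alignment harder.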

Difficulties arise when the real environment deviates substantially from the model. A BIM model usually contains only walls, doors, and windows; it does not record the exact position of the furniture or of every other element in the environment. This creates discrepancies between what the robot sees and what is in the reference model.

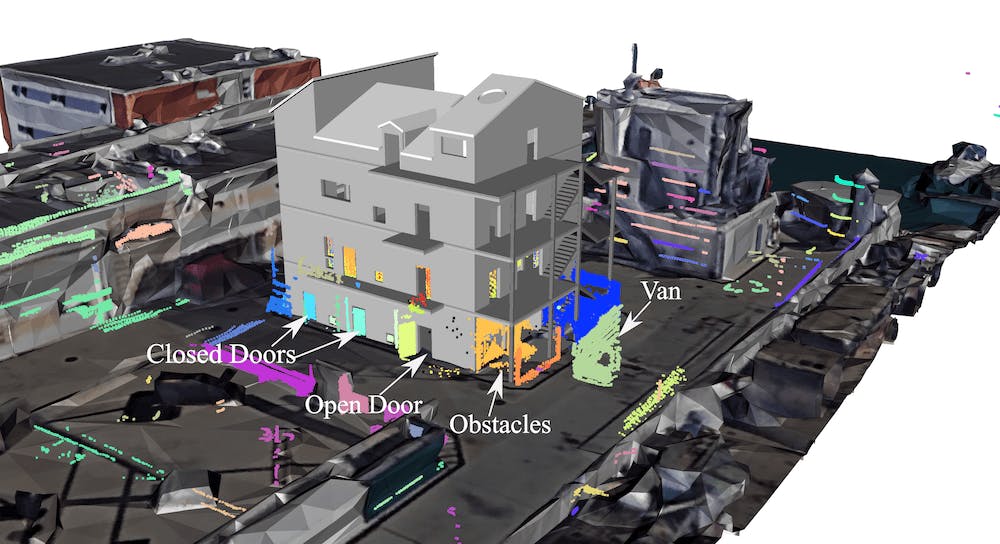

All the elements that aren't present in the model make the alignment difficult. Not only furniture but also dynamic elements like moving people and objects that aren't present in the model will affect the localization accuracy.

In the future, the aim is also to map the current state of the environment, which may differ considerably from the as-planned state of the building.

With an updated map, we can not only keep track of the robot's trajectory but also, for example, localize the victims accurately. More importantly, we can give an overview to the first responders of the current situation, increasing situational awareness and enabling fast decision-making.

Aligned 3D map created by the EMM during pilot 2.

Why EMM?

The two main motivations behind the EMM in INTREPID are:

- Rapidly increase situational awareness in disaster areas without risking the lives of first responders.

- Support decision-making and autonomous robotic tasks with an accurate, up-to-date 3D map.

Goals

To summarize, the EMM has three main goals:

- Use prior reliable information to improve the localization and mapping accuracy in challenging indoor and outdoor environments.

- Create an aligned, updated 3D map with correct georeferencing so that it can be displayed on a general map with semantic information at the building and city level.

- Detect changes between the acquired data and the reference map.

With such a pipeline, the end goal of the EMM in the INTREPID project is to gather different sources of information from different robots and put them in a single georeferenced map.

Moreover, since the EMM also calculates the transformation from the global coordinate system to the local BIM coordinate system, it enables the exploitation of semantic BIM models for high-level autonomous robotic tasks, like autonomous navigation and recurrent inspection.
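As a rough illustration of what such a transformation looks like, the sketch below converts points between a global frame and a local BIM frame. It assumes a purely rigid transform (a shift of the origin plus a rotation about the vertical axis) and metric, projected global coordinates. The class name, field names, and numbers are hypothetical and are not taken from the EMM.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class GeoreferenceTransform:
    """Hypothetical rigid transform between a global and a BIM-local frame."""
    origin_east: float    # global easting of the BIM origin, in metres
    origin_north: float   # global northing of the BIM origin, in metres
    origin_height: float  # global height of the BIM origin, in metres
    rotation_rad: float   # counter-clockwise angle from global east to BIM x axis

    def global_to_local(self, east, north, height):
        """Express a global point in BIM-local coordinates."""
        de, dn = east - self.origin_east, north - self.origin_north
        c, s = math.cos(self.rotation_rad), math.sin(self.rotation_rad)
        # Project the offset onto the local x axis (c, s) and y axis (-s, c).
        return (c * de + s * dn, -s * de + c * dn, height - self.origin_height)

    def local_to_global(self, x, y, z):
        """Express a BIM-local point in global coordinates."""
        c, s = math.cos(self.rotation_rad), math.sin(self.rotation_rad)
        return (self.origin_east + c * x - s * y,
                self.origin_north + s * x + c * y,
                self.origin_height + z)

# Assumed example values: the BIM x axis points towards global north.
transform = GeoreferenceTransform(100.0, 200.0, 10.0, math.pi / 2)
local_point = transform.global_to_local(100.0, 205.0, 12.0)
round_trip = transform.local_to_global(*local_point)
```

With a transform of this kind, a position estimated in the global frame can be looked up in the BIM model, and a room or door taken from the model can be placed on the georeferenced map.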

Additional Contributions to INTREPID

In INTREPID, the EMM, together with the Path Planning Module (PPM) and TUM more generally as a consortium member, also contributes 3D BIM models of the pilot sites, created from architectural floor plans. The pilots are the scenarios in which the capabilities of the numerous INTREPID modules are validated.

For Pilot 1, the environment was a large metro station in Stockholm; for Pilot 2, it was a multi-story building in Marseille designed for firefighter training.

Both models were created with a high level of detail, considering indoor spaces, and with all the needed semantics for the other modules, e.g., for the Path Planning Module.

BIM model for Pilot 1.

BIM model for Pilot 2.

Next steps

The future focus of the EMM can be summarized in the following three points.

- If the robot moves fast, the position accuracy can degrade significantly. Therefore, we would also like to exploit information from the inertial measurement unit (IMU) sensors on the robots to improve localization accuracy.

- Once an aligned map has been created, it should also be possible to update the current map, accounting not only for new elements (positive differences) but also for removed elements (negative differences).

- So far, the main focus has been on LiDAR information. In the future, however, we would also like to use camera information to improve pose estimation (localization) and to give realistic color to the final aligned maps.
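The positive and negative differences mentioned in the second point can be sketched with a simple distance test between two aligned point sets. This is a minimal illustration under stated assumptions, not the EMM's method: the function names, the 0.2 m threshold, and the sample points are hypothetical, and the brute-force search only suits small inputs.

```python
import math

def has_neighbor(point, cloud, threshold):
    """True if any point of the cloud lies within threshold of the given point."""
    return any(math.dist(point, q) <= threshold for q in cloud)

def detect_changes(scan, reference, threshold=0.2):
    """Split differences between an aligned scan and a reference map.

    Positive differences are scan points with no nearby reference point
    (new elements). Negative differences are reference points with no
    nearby scan point (elements that appear to have been removed).
    """
    positive = [p for p in scan if not has_neighbor(p, reference, threshold)]
    negative = [q for q in reference if not has_neighbor(q, scan, threshold)]
    return positive, negative

# Hypothetical data: a wall present in both sets, one element that exists
# only in the reference map, and one object that exists only in the scan.
wall = [(float(x), 0.0, 0.0) for x in range(5)]
reference = wall + [(2.0, 3.0, 0.0)]
scan = wall + [(4.0, 2.0, 0.0)]

positive, negative = detect_changes(scan, reference)
```

A practical version would also need to check visibility: a reference point that the sensor never observed, for example because it was occluded or out of range, should not be reported as removed.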

The EMM identified new objects in the 3D map during Pilot 2.

Pilot 2 BIM model in the 3D map.
