July 20, 2021

The INTREPID approach for extended reality

Post-disaster rescue operations are hazardous by nature. They are carried out in uncertain, unknown environments, and rely solely on the perception of First Responders (FRs). Gathering and sharing information comes at a high risk.

The INTREPID project intends to dramatically increase the data available to FRs, in the form of sensor data, positioning information, alerts and recommendations. This wealth of information comes with its own challenge, though: how to display and share it quickly and efficiently, in a context where every minute counts and lives are at stake?

INTREPID has chosen eXtended Reality (XR) as an innovative way to display information for increased situational awareness. XR covers three potential modes: Virtual Reality (VR), Augmented Reality (AR) and Mixed Reality (MR).

Virtual Reality (VR): the full immersive solution

VR is well known by now, thanks to a wide range of consumer-grade products, from the Google Cardboard to UHD Pimax headsets. It immerses users in a fully virtual world and allows them to interact with it.

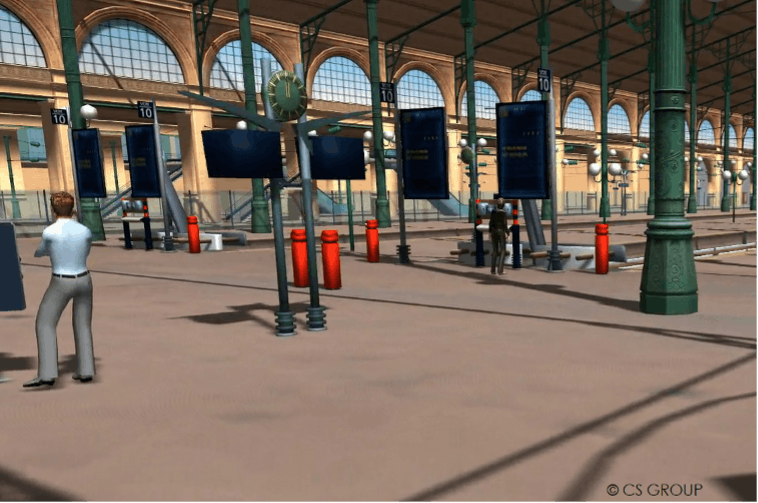

The INTREPID platform, through its Digital Mock-Up module, gathers not only geographical data and existing 3D models, but also updated 3D representations of the area, extracted from real-time data acquired by INTREPID drones and robots (Environment Mapping Module) as well as data from on-site sensors and cameras. This allows for a life-size, real-time representation of the disaster area.

Figure 1: VR view includes existing geospatial information and models, and real-time data from UxV and sensors.

In operation, however, VR cuts users off from their surroundings. It can thus have an impact on the workflow in the command centre. Extended use can also cause physical discomfort (nausea, motion sickness). In training, though, with an adapted curriculum, VR would allow users to experience being there without actually having to set up a mock incident. Even better, the virtual world is not limited to a representation of its physical counterpart. It can make invisible threats visible, and display additional information from the INTREPID system right where it is needed.

Augmented Reality (AR): the best of both worlds

AR consists of adding virtual elements provided by the system to the real world (seen either through a camera or directly through the user's own eyes).

It comes in all shapes and sizes, from the more mundane (playing Pokémon GO on a smartphone or trying on virtual glasses on a website) to the most serious, life-saving use cases (surgery assistance or assistance to First Responders). With such a wide array of possibilities, capabilities strongly depend on the device used, the context and the functionalities of the system as a whole.

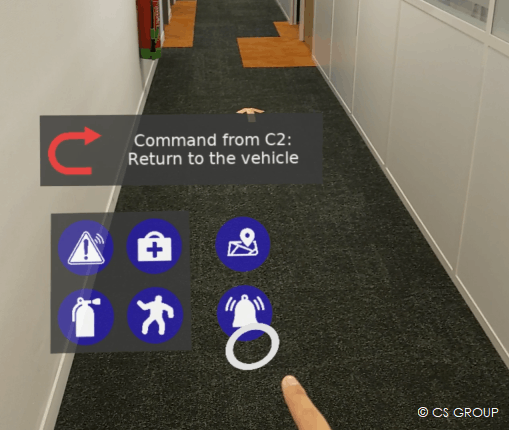

In the INTREPID project, solutions based on dedicated wearable devices (AR glasses) are favoured during the analysis phase, since they offer advanced display capabilities, and are able to closely intertwine visual metaphors with the real world without forcing the user to shift their gaze or use their hands.

Such devices would give the First Responders a global, rich understanding of their surroundings through: INTREPID sensor data (for instance, gas concentrations); data from other actors (for instance positions of the other team members); or even alerts and recommendations from the INTREPID Intelligence Amplification components (for instance, highlighting the path to the nearest victim, identifying a target area, providing alerts about nearby CBRN threats…). FRs immediately receive all the relevant information, and only the relevant information.

However, to obtain a seamless, efficient and unobtrusive integration of both worlds (real and virtual), positions and orientations must be determined precisely, not only for the situational data, but also for the AR device itself. Highly detectable “points of interest” can be used to track environmental features and extract knowledge about the position and orientation of the device. With INTREPID, though, the platform could be deployed anywhere and the presence of points of interest cannot be guaranteed. The AR mode would then rely on the system’s own real-time indoor/outdoor positioning capabilities.
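To illustrate why the device pose matters as much as the data positions, here is a minimal Python sketch. It is not taken from the INTREPID platform: the `Pose` class, the `world_to_device` and `overlay_shift` functions, and the frame convention (device x forward, y left, z up, heading as a yaw angle about the vertical axis) are all assumptions made for the example. It expresses a world-frame point in the device frame, then estimates how far an overlay drifts when the heading estimate is slightly off.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    """Hypothetical AR device pose: position in metres, yaw in radians
    (counter-clockwise about the vertical axis, 0 = facing world +x)."""
    x: float
    y: float
    z: float
    yaw: float


def world_to_device(pose, point):
    """Express a world-frame point (x, y, z) in the device frame.

    Assumed device frame: +x forward, +y left, +z up.
    """
    dx = point[0] - pose.x
    dy = point[1] - pose.y
    dz = point[2] - pose.z
    c, s = math.cos(pose.yaw), math.sin(pose.yaw)
    # Rotate the offset by -yaw to move from world axes to device axes.
    forward = c * dx + s * dy
    left = -s * dx + c * dy
    return (forward, left, dz)


def overlay_shift(distance, yaw_error):
    """Displacement (metres) of an overlay anchored `distance` metres away
    when the heading estimate is wrong by `yaw_error` radians (chord length)."""
    return 2.0 * distance * math.sin(abs(yaw_error) / 2.0)


if __name__ == "__main__":
    # Device at the origin, facing world +y; a marker 5 m straight ahead.
    device = Pose(0.0, 0.0, 1.7, math.radians(90))
    print(world_to_device(device, (0.0, 5.0, 1.7)))
    # Overlay drift for a marker 10 m away with a 2-degree heading error.
    print(round(overlay_shift(10.0, math.radians(2)), 3))
```

Under these assumptions, a heading error of only 2 degrees moves an overlay placed 10 m away by roughly 35 cm, which is enough to highlight the wrong doorway or corridor.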

Figure 2: HoloLens-wearing user receives navigation assistance (top) and alerts (right) when exploring a building

Mixed Reality (MR): the whole situation in the palm of the hand

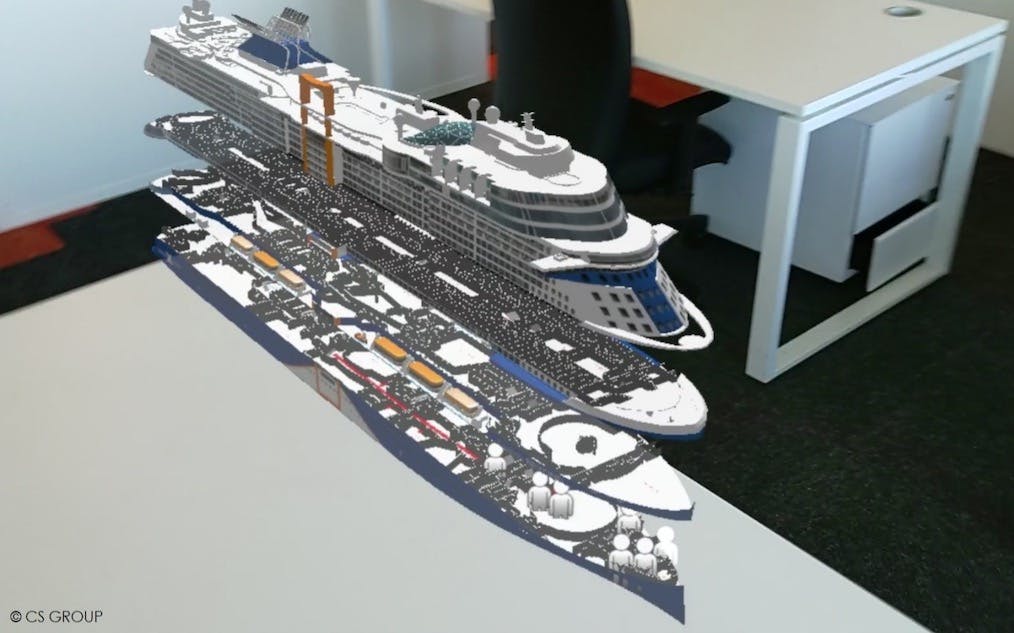

Mixed Reality (MR) can be considered an advanced version of Augmented Reality. It also consists of bringing virtual elements into the real world. Yet, instead of displaying them as passive additions to reality, MR rendering takes the physical elements into account. It also allows the user to interact precisely with the virtual components of the scene, blurring the line between real and virtual.

This is embodied in INTREPID’s Holographic Common Operational Picture (Holographic COP). In this concept, a virtual representation of the disaster area is built and displayed for the connected users through AR devices, like a mock-up before their eyes. Like its physical, static cardboard counterpart, this scale model displays the 3D representations of the area, but its MR nature adds features a physical model cannot offer: navigation and manipulation at the snap of a finger, real-time situational information such as the position of actors or threats, regular updates throughout the crisis, and the ability to go back and forth in time for an after-action review.

Figure 3: HoloLens-wearing user viewing the COP when handling incidents on a cruise ship

Advanced visualisation components based on eXtended Reality go hand in hand with advanced situational awareness and decision-support capabilities. The vast amount of information, acquired or generated, allows a detailed, up-to-date and rich rendering of the situation. Conversely, such an amount of data requires visualisation options that go beyond a 2D computer screen. The XR modes considered in INTREPID offer multiple possibilities, and the various workshops organised in the scope of the project will help narrow down the best and most relevant uses to harness the system's capabilities.

Article written by CS GROUP.
